Search engines constantly change their algorithms, and each change can bring updates to ranking factors and best practices. Even so, some websites remain steady performers when such updates roll out.

For such sites, the cause seems to be solid technical SEO, as opposed to clever hacks or trend chasing.

Reliable technical SEO means building a site that search engines can crawl, interpret, and trust. It also means staying resilient to the updates that search engines regularly roll out.

Sound technical SEO supports every other SEO effort and protects long-term site visibility. This article covers the basics of technical SEO, why these fundamentals stand the test of time, and best practices for implementation.

Timeless Technical SEO: Why It Matters More Than Ever

SEO continues to change, and search engines keep evolving. Yet they still depend on technical signals: before a search engine can judge a page's quality, it has to be able to access and interpret that page.

No matter how great the content is, if search engines cannot crawl and index it, it will not rank.

Technical SEO creates a framework that allows sites to scale content, earn links, and survive Google updates without constant problems.

Mastering the Basics of Technical SEO

When it comes to technical SEO, the goal is to optimize how a site performs for the search engines themselves. That means we first need to understand how Google and other search engines work.

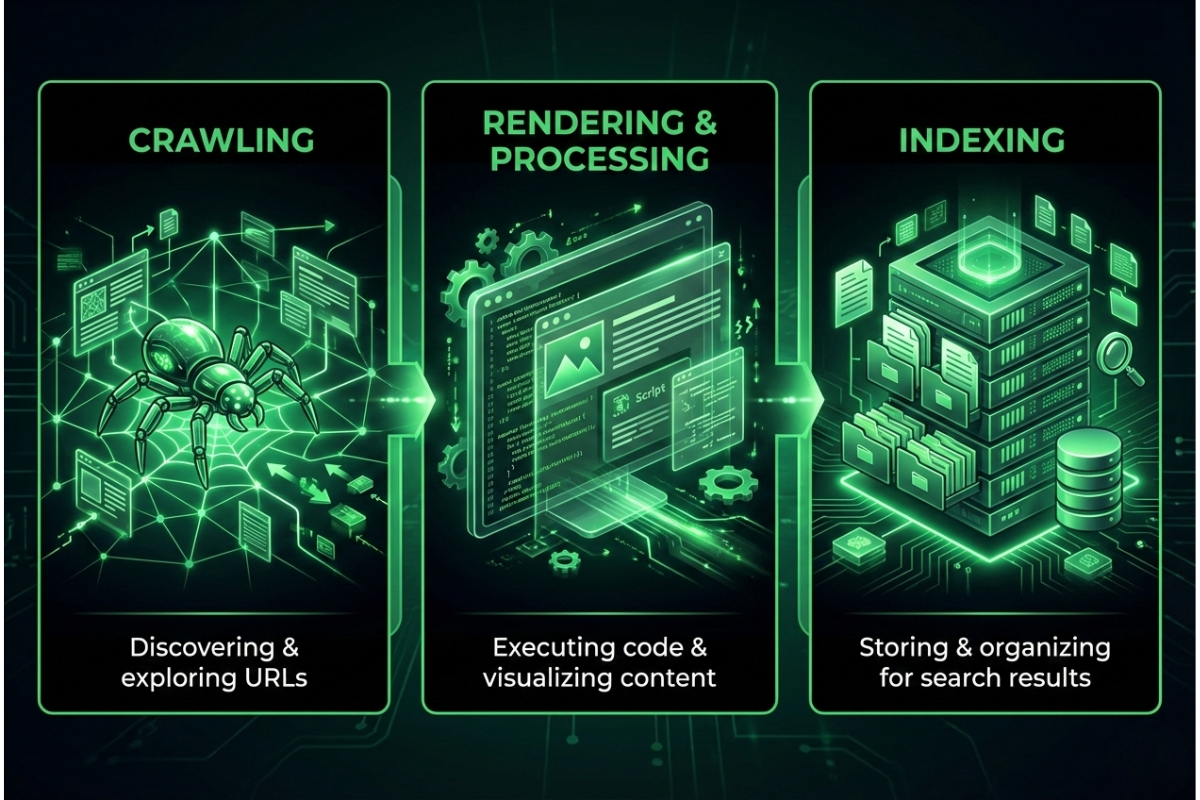

There are three major components of how search engines work.

1. Crawling

So, how do search engines crawl websites? Well, their bots discover URLs by following links, sitemaps, and known URL patterns.

Crawl budgets are limited, especially for large sites, so efficiency matters.
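As a rough illustration of link-based discovery, the sketch below extracts same-host URLs from a page's HTML using only the Python standard library. The names `LinkCollector` and `discover_internal_urls` and the `example.com` URLs are made up for this example. Real crawlers are far more involved; this only shows the basic idea of following links.

```python
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collects href values from <a> tags (hypothetical helper)."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def discover_internal_urls(html, page_url):
    """Return the sorted, de-duplicated same-host URLs linked from a page."""
    collector = LinkCollector()
    collector.feed(html)
    host = urlparse(page_url).netloc
    found = set()
    for href in collector.hrefs:
        # Resolve relative links and drop #fragments, which do not
        # identify separate documents.
        absolute, _fragment = urldefrag(urljoin(page_url, href))
        parsed = urlparse(absolute)
        if parsed.scheme in ("http", "https") and parsed.netloc == host:
            found.add(absolute)
    return sorted(found)


sample_html = """
<a href="/guides/">Guides</a>
<a href="guides/crawling#budget">Crawling</a>
<a href="https://other.example.org/">External</a>
<a href="mailto:team@example.com">Mail</a>
"""
print(discover_internal_urls(sample_html, "https://example.com/"))
```

Every URL discovered this way becomes a candidate for crawling, which is one reason duplicate or low-value links can eat into a limited crawl budget.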

2. Rendering and Processing

Pages are rendered, JavaScript is evaluated, and structural signals are assessed. This step determines whether content is fully accessible or partially invisible.

3. Indexing

Only pages that meet quality and accessibility thresholds make it into the index. Many crawlable pages are never indexed.

This is where crawlability and indexability in SEO become critical. A page can be crawlable but blocked from indexing, or indexable but rarely crawled due to poor internal signals.
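To make the distinction concrete, here is a minimal sketch that reports the two signals separately for a single page: whether robots.txt rules allow crawling, and whether the HTML carries a `noindex` robots meta tag. It assumes you already have the robots.txt text and the page HTML in hand. The function name `crawl_signals`, the user agent `ExampleBot`, and the sample URLs are illustrative assumptions, not anything a particular search engine uses.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class RobotsMetaParser(HTMLParser):
    """Detects <meta name="robots" content="... noindex ..."> (hypothetical helper)."""

    def __init__(self):
        super().__init__()
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            if "noindex" in (attrs.get("content") or "").lower():
                self.noindex = True


def crawl_signals(url, html, robots_txt, user_agent="ExampleBot"):
    """Report the crawl and index signals for one page, kept separate on purpose."""
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    meta = RobotsMetaParser()
    meta.feed(html)
    return {
        "crawl_allowed": rules.can_fetch(user_agent, url),
        "noindex": meta.noindex,
    }


ROBOTS_TXT = "User-agent: *\nDisallow: /search\n"
PAGE_HTML = '<html><head><meta name="robots" content="noindex, follow"></head></html>'

print(crawl_signals("https://example.com/guides/", PAGE_HTML, ROBOTS_TXT))
print(crawl_signals("https://example.com/search?q=shoes", "<html></html>", ROBOTS_TXT))
```

The first page can be crawled but asks not to be indexed. The second carries no `noindex` tag but is blocked from crawling, so a crawler that respects robots.txt would never get to read its meta tags.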

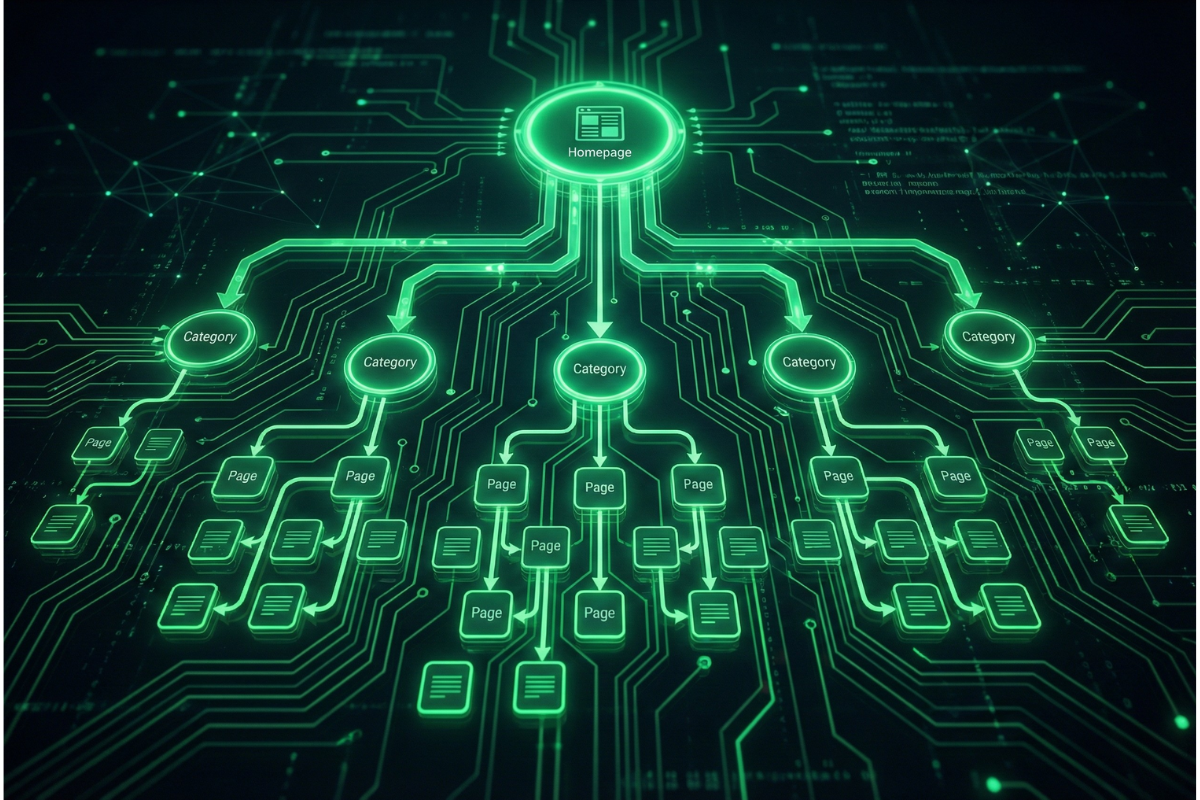

Site Structure for SEO: The Long-Term Backbone

Having a clear and functional architecture is one of the most lasting ranking advantages a site can have.

Why Site Structure Still Wins

A well-structured site helps search engines use your internal links to understand value, relationships, and topical depth. A well-structured site for SEO ensures that:

- Important pages are prioritized for crawling

- Link equity flows in a logical manner

- Content topics are easy to understand

When the structure is messy, search engines waste resources and miss context.

Characteristics of a Durable Structure

A site structure for SEO that is meant to last will often include:

- Clear relationships between categories and their subpages

- Shallow depth (important pages are within 3 clicks)

- URLs that follow a consistent pattern and rarely change

Long-term crawl inefficiencies are often a result of frequent restructuring or heavy dependence on filters and parameters.
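The "within 3 clicks" guideline can be checked with a breadth-first search over the internal link graph. The sketch below assumes the graph has already been collected (for example, from a crawl export) as a dictionary mapping each page to the pages it links to. The paths and function names are invented for illustration.

```python
from collections import deque


def click_depths(link_graph, home):
    """Breadth-first search: minimum number of clicks from home to each reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in link_graph.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def pages_too_deep(link_graph, home, max_clicks=3):
    """Pages that need more than max_clicks clicks to reach from home."""
    depths = click_depths(link_graph, home)
    return sorted(page for page, depth in depths.items() if depth > max_clicks)


# Hypothetical internal link graph: page -> pages it links to.
site = {
    "/": ["/shoes/", "/blog/"],
    "/shoes/": ["/shoes/running/"],
    "/shoes/running/": ["/shoes/running/trail/"],
    "/shoes/running/trail/": ["/shoes/running/trail/model-x/"],
    "/blog/": [],
}
print(pages_too_deep(site, "/"))
```

In this toy graph the product page sits four clicks from the homepage. Linking to it from a category page or the homepage would bring it within the three-click guideline.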

Crawlability vs. Indexability: The Distinction That Breaks Sites

A site can appear technically sound and still be misaligned when it comes to crawlability and indexability in SEO.

Crawlability

Crawlability refers to whether search engines can access a web page. It is often impeded by robots.txt rules, broken internal links, or JavaScript-only navigation.

Indexability

Indexability concerns whether a page can be indexed after a successful crawl. Noindex tags, canonical misconfigurations, and thin content get in the way of indexability.

To achieve high crawlability and indexability, content strategies must be aligned with the website's technical architecture. Mistakenly noindexing important templates or misusing canonical tags can erase a site's progress.
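One way to catch canonical mistakes early is to compare each page's canonical tag with its own URL. The following sketch is a simplified audit helper under that assumption. `canonical_issue` and the sample URLs are hypothetical. A canonical that points elsewhere is not always wrong, so the result is best treated as a prompt for manual review.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class CanonicalParser(HTMLParser):
    """Collects href values of <link rel="canonical"> tags (hypothetical helper)."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        rel_values = (attrs.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel_values and attrs.get("href"):
            self.canonicals.append(attrs["href"])


def canonical_issue(page_url, html):
    """Return a short description of a possible canonical problem, or None."""
    parser = CanonicalParser()
    parser.feed(html)
    if not parser.canonicals:
        return "missing canonical"
    if len(parser.canonicals) > 1:
        return "multiple canonicals"
    target = urljoin(page_url, parser.canonicals[0])
    if target != page_url:
        return f"canonicalized to {target}"
    return None


category_html = '<head><link rel="canonical" href="https://example.com/"></head>'
print(canonical_issue("https://example.com/shoes/", category_html))
```

Here a category page declares the homepage as its canonical. Applied across a whole template, that pattern could signal that every category page is a duplicate of the homepage.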

Technical Signals That Earn Trust Over Time

Search engines do not grant trust up front, and it cannot be gamed. A site earns it by behaving consistently over time.

Page Speed and Stability

Speed is important, and so is consistency. A website that loads quickly and reliably across devices and networks earns more trust and keeps visitors more engaged.

Clean Status Codes

Trust in a site is degraded by long-standing 404 errors, redirect chains, and soft 404s, which often go unnoticed. Search engines note these issues and may crawl a site less frequently if the problems persist.
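Redirect chains and loops are easy to spot once responses have been recorded. The sketch below walks a dictionary of previously collected responses and makes no network requests. The mapping format (`URL -> (status, Location header)`), the function name `trace_redirects`, and the URLs are assumptions for illustration.

```python
REDIRECT_STATUSES = (301, 302, 303, 307, 308)


def trace_redirects(start_url, responses, max_hops=10):
    """Follow recorded redirects from start_url.

    Returns (chain, outcome), where chain lists every URL visited and
    outcome is the final status code, "loop", or "too many hops".
    """
    chain = [start_url]
    url = start_url
    for _ in range(max_hops):
        status, location = responses.get(url, (None, None))
        if status in REDIRECT_STATUSES and location:
            chain.append(location)
            if location in chain[:-1]:
                return chain, "loop"
            url = location
        else:
            return chain, status
    return chain, "too many hops"


# Hypothetical crawl data: URL -> (status code, Location header or None).
recorded = {
    "http://example.com/old": (301, "https://example.com/old"),
    "https://example.com/old": (301, "https://example.com/new"),
    "https://example.com/new": (200, None),
    "https://example.com/a": (302, "https://example.com/b"),
    "https://example.com/b": (302, "https://example.com/a"),
}

chain, outcome = trace_redirects("http://example.com/old", recorded)
print(len(chain) - 1, "hops, final outcome:", outcome)
```

A chain with more than one hop is a candidate for cleanup: pointing the first URL straight at the final destination saves crawlers and users the extra requests.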

Secure and Predictable Infrastructure

Trust is built and maintained through HTTPS, stable hosting, and consistent deployment practices.

These elements represent the basics of technical SEO that rarely lose their importance, even as metrics and tooling change.

Modern Rendering, JavaScript, and Its Challenges

JavaScript, on its own, does not negatively affect SEO, but its misuse creates problems with visibility over time.

Though search engines are able to render JavaScript, they do not do so instantly or uniformly across pages. Sites that rely heavily on client-side rendering may see delayed indexing, or may find that parts of their content are not visible to search engines.

The best practice remains simple:

- Critical content and links need to be available in the initial HTML.

- Navigation and pagination should not be rendered exclusively via JavaScript.

- Check the rendered output, not just the source code.

These practices, while modern, are still in line with the principles of technical SEO.
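A quick way to test the first two points is to scan the initial HTML response, before any JavaScript runs, for the text and links that matter most. The sketch below does this with the standard library. The phrases, link paths, and helper names are placeholders you would swap for your own, and it complements a rendered-output check rather than replacing one.

```python
from html.parser import HTMLParser


class InitialHtmlScan(HTMLParser):
    """Collects visible text and link targets, ignoring <script> and <style> bodies."""

    def __init__(self):
        super().__init__()
        self.text_parts = []
        self.hrefs = set()
        self._skip_depth = 0  # greater than 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.add(href)

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.text_parts.append(data)


def missing_from_initial_html(html, required_phrases, required_links):
    """Return (phrases, links) that the unrendered HTML does not contain."""
    scan = InitialHtmlScan()
    scan.feed(html)
    text = " ".join(" ".join(scan.text_parts).split()).lower()
    missing_phrases = [p for p in required_phrases if p.lower() not in text]
    missing_links = [link for link in required_links if link not in scan.hrefs]
    return missing_phrases, missing_links


# Hypothetical page whose main heading is injected client-side.
raw_html = """<html><body><div id="app"></div>
<a href="/pricing">Pricing</a>
<script>document.getElementById("app").textContent = "Trail running shoes";</script>
</body></html>"""

print(missing_from_initial_html(raw_html, ["Trail running shoes"], ["/pricing", "/shoes/page/2"]))
```

In this example the heading text exists only inside a script, and the pagination link is absent from the initial HTML, so both are reported as missing.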

Building a Technical Foundation That Lasts

Timeless technical SEO focuses on clarity, consistency, and accessibility.

When you know how search engines crawl websites, and you keep crawl paths clean, structures clear, and indexing signals accurate, you get stable results.

The next step is simple: audit the fundamentals, fix what blocks access or understanding, and reinforce what already works. Long-term SEO success is rarely about doing a lot of things; it is about laying the right foundations and letting results build on them.